Web Server Load-Balancing with HAProxy on Ubuntu 14.04

What is HAProxy?

HAProxy (High Availability Proxy) is an open-source load balancer that can balance any TCP service. It is a free, very fast, and reliable solution that offers load balancing, high availability, and proxying for TCP and HTTP-based applications. It is particularly well suited to very high-traffic websites and powers many of the world's most visited ones.

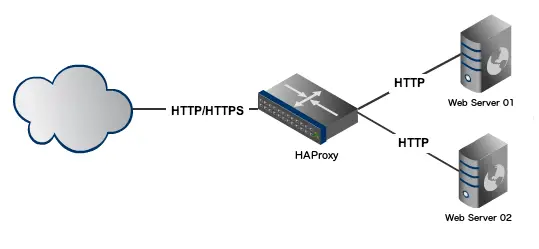

Since it's existence, it has become the de-facto standard open-source load-balancer. Although it does not advertise itself, but is used widely. Below is a basic diagram of how the setup looks like:

Installing HAProxy

I am using Ubuntu 14.04, where HAProxy can be installed with:

apt-get install haproxy

You can check the version by:

haproxy -v

For the init script to start HAProxy, edit /etc/default/haproxy and set the ENABLED option to 1:

ENABLED=1
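If you would rather make this change from the command line, the following is a minimal sketch. The helper name enable_haproxy is hypothetical (it is not part of HAProxy), and it assumes GNU sed, which is the default on Ubuntu.

```shell
# Hypothetical helper (not part of HAProxy): set ENABLED=1 in a defaults file.
# Assumes GNU sed, which supports in-place editing with -i.
enable_haproxy() {
    file="${1:-/etc/default/haproxy}"
    if grep -q '^ENABLED=' "$file"; then
        # Rewrite the existing ENABLED line.
        sed -i 's/^ENABLED=.*/ENABLED=1/' "$file"
    else
        # No ENABLED line yet: append one.
        echo 'ENABLED=1' >> "$file"
    fi
}
```

Run it from a root shell, since /etc/default/haproxy is owned by root. With no argument it edits /etc/default/haproxy; pass another path to try it on a copy first.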

To verify that the init script is in place, type the command below and press Tab instead of supplying an action. The shell should list the available actions:

$ service haproxy <press_tab_key>

reload restart start status stop

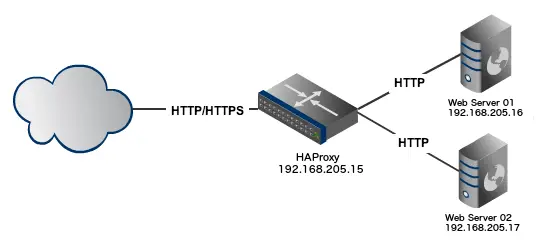

HAProxy is now installed. Let us now create a setup with two Apache web server instances and one HAProxy instance. Below is the setup information:

We will be using three virtual machines created with VirtualBox:

Instance 1 - Load Balancer

Hostname: haproxy

OS: Ubuntu

Private IP: 192.168.205.15

Instance 2 - Web Server 1

Hostname: webserver01

OS: Ubuntu with LAMP

Private IP: 192.168.205.16

Instance 3 - Web Server 2

Hostname: webserver02

OS: Ubuntu with LAMP

Private IP: 192.168.205.17

Here is a diagram of the setup:

Let us now configure HAProxy.

Configuring HAProxy

Back up the original file by renaming it:

mv /etc/haproxy/haproxy.cfg{,.original}

We'll create our own haproxy.cfg file. Using your favorite text editor, create /etc/haproxy/haproxy.cfg with the following contents:

    global
        log /dev/log local0
        log 127.0.0.1 local1 notice
        maxconn 4096
        user haproxy
        group haproxy
        daemon

    defaults
        log global
        mode http
        option httplog
        option dontlognull
        retries 3
        option redispatch
        maxconn 2000
        contimeout 5000
        clitimeout 50000
        srvtimeout 50000

    listen webfarm 0.0.0.0:80
        mode http
        stats enable
        stats uri /haproxy?stats
        balance roundrobin
        option httpclose
        option forwardfor
        server webserver01 192.168.205.16:80 check
        server webserver02 192.168.205.17:80 check

Explanation:

    global
        log /dev/log local0
        log 127.0.0.1 local1 notice
        maxconn 4096
        user haproxy
        group haproxy
        daemon

The log directive specifies a syslog server to which log messages will be sent.

The maxconn directive specifies the maximum number of concurrent connections on the front end. The default value is 2000; tune it according to your system's resources.

The user and group directives switch the HAProxy process to the specified user and group. These shouldn't be changed.

    defaults
        log global
        mode http
        option httplog
        option dontlognull
        retries 3
        option redispatch
        maxconn 2000
        contimeout 5000
        clitimeout 50000
        srvtimeout 50000

The section above sets the default values. The option redispatch directive enables session redistribution in case of connection failures, so session stickiness is overridden if a web server instance goes down.

The retries directive sets the number of retries to perform on a web server instance after a connection failure.

The values you are most likely to modify are the timeout directives, which are given in milliseconds (so 5000 means five seconds). The contimeout directive specifies the maximum time to wait for a connection attempt to a web server instance to succeed.

The clitimeout and srvtimeout directives apply when the client or server is expected to acknowledge or send data. The HAProxy documentation recommends setting the client and server timeouts to the same value.
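The HAProxy documentation marks contimeout, clitimeout and srvtimeout as deprecated spellings of timeout connect, timeout client and timeout server. The sketch below shows one way to rewrite them. The filter name modernize_timeouts is hypothetical, and it assumes each keyword sits at the start of its line, optionally indented.

```shell
# Hypothetical filter: rewrite the deprecated timeout keywords read from
# stdin to their "timeout ..." equivalents. Values are left unchanged.
modernize_timeouts() {
    sed -e 's/^\([[:space:]]*\)contimeout[[:space:]]\{1,\}/\1timeout connect /' \
        -e 's/^\([[:space:]]*\)clitimeout[[:space:]]\{1,\}/\1timeout client /' \
        -e 's/^\([[:space:]]*\)srvtimeout[[:space:]]\{1,\}/\1timeout server /'
}
```

For example, `modernize_timeouts < /etc/haproxy/haproxy.cfg` prints the converted configuration to the terminal without touching the original file.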

    listen webfarm 0.0.0.0:80
        mode http
        stats enable
        stats uri /haproxy?stats
        balance roundrobin
        option httpclose
        option forwardfor
        server webserver01 192.168.205.16:80 check
        server webserver02 192.168.205.17:80 check

The block above contains the configuration for both the frontend and the backend. We are configuring HAProxy to listen on port 80 for webfarm, which is just a name that identifies the application.

The stats directives enable the connection statistics page. This page can be viewed at the URL given in stats uri, which in this case is http://192.168.205.15/haproxy?stats. A demo of this page can be viewed here.
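The statistics page can also return its data as CSV when ;csv is appended to the stats URI, which is handy for scripting. The sketch below summarises that output. The helper name server_status is hypothetical, and it finds the status column by its header name rather than assuming a fixed position.

```shell
# Hypothetical helper: read HAProxy stats CSV on stdin and print
# "proxy/server: status" for each row. The status column is located by
# name in the header line rather than by a fixed position.
server_status() {
    awk -F',' '
        NR == 1 {
            sub(/^# /, "", $1)    # header line starts with "# pxname"
            for (i = 1; i <= NF; i++) if ($i == "status") col = i
            next
        }
        col && NF >= col { print $1 "/" $2 ": " $col }
    '
}
```

With the setup in this article, it could be fed with `curl -s 'http://192.168.205.15/haproxy?stats;csv' | server_status`.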

The balance directive specifies the load-balancing algorithm to use. The available options include:

- Round Robin (roundrobin),

- Static Round Robin (static-rr),

- Least Connections (leastconn),

- Source (source),

- URI (uri) and

- URL parameter (url_param).

Information about each algorithm can be obtained from the official documentation.
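To make the roundrobin choice used in this article concrete, here is a small illustration of plain round-robin selection. It is only a sketch of the idea, not HAProxy's implementation, which also takes server weights and health into account. The function name round_robin is hypothetical.

```shell
# Illustration only: plain round-robin over a fixed server list.
# Arguments: number of requests, then the server names.
round_robin() {
    requests="$1"; shift
    n=1
    while [ "$n" -le "$requests" ]; do
        echo "request $n -> $1"
        # Rotate: move the server just used to the back of the list.
        first="$1"; shift; set -- "$@" "$first"
        n=$((n + 1))
    done
}
```

Calling `round_robin 4 webserver01 webserver02` alternates between the two names, starting with webserver01.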

The server directive declares a backend server. The syntax is:

server <server_name> <server_address>[:port] [param*]

The name we give here will appear in logs and alerts. This directive supports several more parameters; in this article we use only the check parameter. The check option enables health checks on the web server instance. Without it, the web server instance is always considered available.
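As a small illustration of that syntax, the sketch below prints a server line from a name and an address. The helper name server_line is hypothetical, and the default port of 80 is an assumption that matches this article's setup.

```shell
# Hypothetical helper: print a "server" line with health checks enabled.
# Arguments: name, address, optional port (defaults to 80).
server_line() {
    printf 'server %s %s:%s check\n' "$1" "$2" "${3:-80}"
}
```

For instance, `server_line webserver01 192.168.205.16` prints the first server line used in the configuration above.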

Once you're done configuring, start the HAProxy service:

sudo service haproxy start
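If the service fails to start, or before any later restart, it can help to check the configuration file for errors first. HAProxy's -c flag runs it in check mode and -f names the file to read. The wrapper below is a minimal sketch: validate_config and the HAPROXY_BIN variable are hypothetical names.

```shell
# Binary to run; override HAPROXY_BIN if haproxy is not on the PATH.
HAPROXY_BIN="${HAPROXY_BIN:-haproxy}"

# Hypothetical wrapper: check a configuration file without starting the proxy.
# -c runs HAProxy in check mode, -f names the configuration file.
validate_config() {
    "$HAPROXY_BIN" -c -f "${1:-/etc/haproxy/haproxy.cfg}"
}
```

The function returns HAProxy's own exit status, so it can be chained as `validate_config && sudo service haproxy restart`.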

Testing Load-Balancing and Fail-over

We will append the server name to the default index.html file on both web servers, located by default at /var/www/index.html.

On Instance 2 - Web Server 1 (webserver01, IP 192.168.205.16), append the line as follows:

sudo sh -c "echo \<h1\>Hostname: webserver01 \(192.168.205.16\)\<\/h1\> >> /var/www/index.html"

On Instance 3 - Web Server 2 (webserver02, IP 192.168.205.17), append the line as follows:

sudo sh -c "echo \<h1\>Hostname: webserver02 \(192.168.205.17\)\<\/h1\> >> /var/www/index.html"

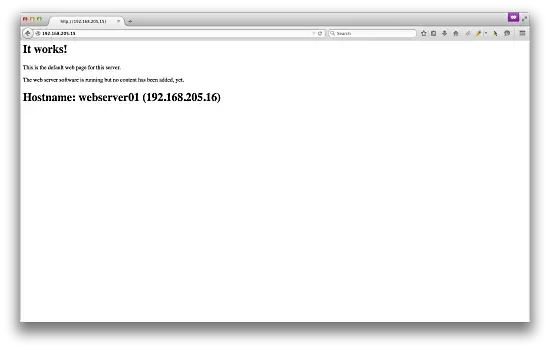

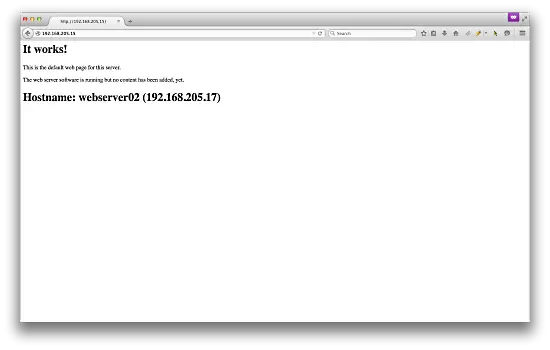

Now open a web browser on your local machine and browse to the HAProxy IP, i.e. http://192.168.205.15

Each time you refresh the tab, you'll see the load being distributed to each web server in turn. Below are screenshots from my browser:

The first time I visit http://192.168.205.15, I get:

And the second time, i.e. when I refresh the page, I get:

You can also check the haproxy stats by visiting http://192.168.205.15/haproxy?stats
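The refresh test can also be run from the command line. The sketch below counts how often each web server answered, relying on the "Hostname: ..." line appended to index.html earlier. The helper name count_backends is hypothetical.

```shell
# Hypothetical helper: read page bodies on stdin and count how many times
# each "Hostname: ..." marker (added to index.html earlier) appears.
count_backends() {
    grep -o 'Hostname: [A-Za-z0-9]*' | sort | uniq -c
}
```

One way to use it is `for i in 1 2 3 4; do curl -s http://192.168.205.15/; done | count_backends`. With round-robin balancing and both servers up, the counts should come out roughly even.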

There's more that you can do to this setup. Some ideas include:

- take one or both web servers offline to test what happens when you access HAProxy

- configure HAProxy to serve a custom maintenance page

- configure the web interface so you can visually monitor HAProxy statistics

- change the scheduler to something other than round-robin

- configure prioritization/weights for particular servers

That's all!