How to Install and Configure Suricata IDS alongside Elastic Stack on Debian 12

On this page

- Prerequisites

- PART 1

- Step 1 - Install Suricata

- Step 2 - Configure Suricata

- Step 3 - Configure Suricata Rules

- Step 4 - Validate Suricata Configuration

- Step 5 - Running Suricata

- Step 6 - Testing Suricata Rules

- PART 2

- Step 7 - Install Elasticsearch

- Step 8 - Configure Elasticsearch

- Step 9 - Install and Configure Kibana

- Step 10 - Install and Configure Filebeat

- Step 11 - Accessing Kibana Dashboard

- Step 12 - Managing Kibana Dashboards

- Conclusion

Suricata is a network monitoring tool that examines and processes every packet of internet traffic that flows through your server. It can generate log events, trigger alerts, and drop traffic when it detects suspicious activity.

You can either install Suricata on a single machine to monitor its own traffic or deploy it on a gateway host to scan all incoming and outgoing traffic from other servers connected to it. You can combine Suricata with Elasticsearch, Kibana, and Filebeat to create a Security Information and Event Management (SIEM) tool.

In this tutorial, you will install Suricata IDS along with the Elastic Stack on Debian 12 servers. The various components of the stack are:

- Elasticsearch to store, index, correlate, and search the security events from the server.

- Kibana to display the logs stored in Elasticsearch.

- Filebeat to parse Suricata's eve.json log file and send each event to Elasticsearch for processing.

- Suricata to scan the network traffic for suspicious events and drop the invalid packets.

The tutorial is divided into two parts: the first part deals with installing and configuring Suricata, and the second part deals with installing and configuring the Elastic Stack.

We will install Suricata and the Elastic Stack on different servers for this tutorial.

Prerequisites

- The servers hosting the Elastic Stack and Suricata should each have a minimum of 4GB RAM and 2 CPU cores.

- The servers should be able to communicate with each other using private IP addresses.

- The servers should be running Debian 12 with a non-root sudo user.

- If you want to access Kibana dashboards from everywhere, set up a domain (kibana.example.com) pointing to the server where Elasticsearch will be installed.

- Make sure everything is updated on both servers.

$ sudo apt update && sudo apt upgrade

- Install essential packages on both servers. Some of them may already be installed.

$ sudo apt install wget curl nano ufw software-properties-common dirmngr apt-transport-https gnupg2 ca-certificates lsb-release debian-archive-keyring unzip -y

PART 1

Step 1 - Install Suricata

Suricata is available in the Debian official repositories. Install it using the following command.

$ sudo apt install suricata

The Suricata service is enabled and started automatically. Before proceeding, stop the service, since we need to configure it first.

$ sudo systemctl stop suricata

Step 2 - Configure Suricata

Suricata stores its configuration in the /etc/suricata/suricata.yaml file. The default mode for Suricata is the IDS (Intrusion Detection System) Mode, where the traffic is only logged and not stopped. If you are new to Suricata, you should leave the mode unchanged. Once you have configured it and learned more, you can turn on the IPS (Intrusion Prevention System) mode.

Enable Community ID

The Community ID field makes it easier to correlate data between records generated by different monitoring tools. Since we will use Suricata with Elasticsearch, enabling Community ID can be useful.

Open the file /etc/suricata/suricata.yaml for editing.

$ sudo nano /etc/suricata/suricata.yaml

Locate the line # Community Flow ID and set the value of the variable community-id to true.

. . .

# Community Flow ID

# Adds a 'community_id' field to EVE records. These are meant to give

# records a predictable flow ID that can be used to match records to

# output of other tools such as Zeek (Bro).

#

# Takes a 'seed' that needs to be same across sensors and tools

# to make the id less predictable.

# enable/disable the community id feature.

community-id: true

. . .

Save the file by pressing Ctrl + X and entering Y when prompted.

Now, your events will carry an ID like 1:S+3BA2UmrHK0Pk+u3XH78GAFTtQ= that you can use to match datasets across different monitoring tools.

Select Network Interface

The default Suricata configuration file inspects traffic on the eth0 device/network interface. If your server uses a different network interface, you will need to update that in the configuration.

Check the device name of your network interface using the following command.

$ ip -p -j route show default

You will receive an output like the following.

[ {

"dst": "default",

"gateway": "159.223.208.1",

"dev": "eth0",

"protocol": "static",

"flags": [ ]

} ]

The dev variable refers to the networking device. In our output, it shows eth0 as the networking device. Your output may be different depending on your system.
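If you want to script this step, a small shell function can pull the interface name out of the plain-text form of the same command. The sketch below is only an illustration and is not part of Suricata or iproute2: the function name default_iface is hypothetical, and it assumes the usual `default via <gateway> dev <name> ...` layout printed by `ip route show default`.

```shell
# default_iface (hypothetical helper): read `ip route show default` output on
# stdin and print the word that follows "dev" on the first default route.
default_iface() {
  awk '$1 == "default" {
    for (i = 1; i < NF; i++)
      if ($i == "dev") { print $(i + 1); exit }
  }'
}

# Example on a live system:
#   ip route show default | default_iface
```

With the route shown above, `ip route show default | default_iface` would print eth0. If the server has several default routes, only the first one is used.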

Now that you know your device name, open the configuration file.

$ sudo nano /etc/suricata/suricata.yaml

Find the line af-packet: around line number 580. Under it, set the value of the variable interface to the device name for your system.

# Linux high speed capture support
af-packet:
  - interface: eth0
    # Number of receive threads. "auto" uses the number of cores
    #threads: auto
    # Default clusterid. AF_PACKET will load balance packets based on flow.
    cluster-id: 99
. . .

If you want to add additional interfaces, you can do so by adding them at the bottom of the af-packet section at around line 650.

To add a new interface, insert it just above the - interface: default section as shown below.

  # For eBPF and XDP setup including bypass, filter and load balancing, please
  # see doc/userguide/capture-hardware/ebpf-xdp.rst for more info.

  - interface: enp0s1
    cluster-id: 98
    ...
  - interface: default
    #threads: auto
    #use-mmap: no
    #tpacket-v3: yes

We have added a new interface enp0s1 and a unique value for the cluster-id variable in our example. You need to include a unique cluster ID with every interface you add.
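Because every af-packet interface needs its own cluster ID, it can help to check the file for accidental duplicates after editing it. The following is a minimal sketch using awk, sort, and uniq; the function name duplicate_cluster_ids is hypothetical. It assumes each cluster-id setting sits on its own uncommented, indented line, and it only looks inside the top-level af-packet section, since other capture sections in the file can carry their own cluster-id values.

```shell
# duplicate_cluster_ids FILE (hypothetical helper): print every cluster-id
# value that appears more than once inside the top-level af-packet section.
duplicate_cluster_ids() {
  awk '
    /^[^ \t#][^:]*:/ { section = $1 }   # an unindented key starts a new section
    section == "af-packet:" && /^[ \t]+cluster-id:/ { print $2 }
  ' "$1" | sort | uniq -d
}
```

Running `duplicate_cluster_ids /etc/suricata/suricata.yaml` prints nothing when all IDs are unique; any value it prints is used by more than one interface.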

Find the line pcap: and under it, set the value of the variable interface to the device name for your system.

# Cross platform libpcap capture support
pcap:
  - interface: eth0
    # On Linux, pcap will try to use mmap'ed capture and will use "buffer-size"
    # as total memory used by the ring. So set this to something bigger
    # than 1% of your bandwidth.

To add a new interface as before, insert it just above the - interface: default section, as shown below.

  - interface: enp0s1
  # Put default values here
  - interface: default
    #checksum-checks: auto

Once you are finished, save the file by pressing Ctrl + X and entering Y when prompted.

Step 3 - Configure Suricata Rules

Suricata, by default, only uses a limited set of rules to detect network traffic. You can add more rulesets from external providers using a tool called suricata-update. Run the following command to include additional rules.

$ sudo suricata-update -o /etc/suricata/rules
4/10/2023 -- 14:12:05 - <Info> -- Using data-directory /var/lib/suricata.
4/10/2023 -- 14:12:05 - <Info> -- Using Suricata configuration /etc/suricata/suricata.yaml
4/10/2023 -- 14:12:05 - <Info> -- Using /etc/suricata/rules for Suricata provided rules.
.....
4/10/2023 -- 14:12:05 - <Info> -- No sources configured, will use Emerging Threats Open
4/10/2023 -- 14:12:05 - <Info> -- Fetching https://rules.emergingthreats.net/open/suricata-6.0.10/emerging.rules.tar.gz.
 100% - 4073339/4073339
.....
4/10/2023 -- 14:12:09 - <Info> -- Writing rules to /etc/suricata/rules/suricata.rules: total: 45058; enabled: 35175; added: 45058; removed 0; modified: 0
4/10/2023 -- 14:12:10 - <Info> -- Writing /etc/suricata/rules/classification.config
4/10/2023 -- 14:12:10 - <Info> -- Testing with suricata -T.
4/10/2023 -- 14:12:33 - <Info> -- Done.

The -o /etc/suricata/rules option tells the update tool to save the rules to the /etc/suricata/rules directory, which is where Debian's Suricata configuration looks for them (suricata-update writes to /var/lib/suricata/rules by default). Without this option, you will get the following error during validation.

<Warning> - [ERRCODE: SC_ERR_NO_RULES(42)] - No rule files match the pattern /etc/suricata/rules/suricata.rules

Add Ruleset Providers

You can expand Suricata's rules by adding more providers. It can fetch rules from a variety of free and commercial providers.

You can list the available providers using the following command.

$ sudo suricata-update list-sources

For example, if you want to include the tgreen/hunting ruleset, you can enable it with the following command.

$ sudo suricata-update enable-source tgreen/hunting
4/10/2023 -- 14:24:26 - <Info> -- Using data-directory /var/lib/suricata.
4/10/2023 -- 14:24:26 - <Info> -- Using Suricata configuration /etc/suricata/suricata.yaml
4/10/2023 -- 14:24:26 - <Info> -- Using /etc/suricata/rules for Suricata provided rules.
4/10/2023 -- 14:24:26 - <Info> -- Found Suricata version 6.0.10 at /usr/bin/suricata.
4/10/2023 -- 14:24:26 - <Info> -- Creating directory /var/lib/suricata/update/sources
4/10/2023 -- 14:24:26 - <Info> -- Enabling default source et/open
4/10/2023 -- 14:24:26 - <Info> -- Source tgreen/hunting enabled

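Run the suricata-update command again, with the same -o /etc/suricata/rules option, to download the rules from the new source. Suricata does not need a full restart to pick up rule changes: it supports live rule reloads, which you can trigger by sending the running process a USR2 signal (sudo kill -USR2 $(pidof suricata)).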

Step 4 - Validate Suricata Configuration

Suricata ships with a validation tool to check the configuration file and rules for errors. Run the following command to run the validation tool.

$ sudo suricata -T -c /etc/suricata/suricata.yaml -v
4/10/2023 -- 14:24:43 - <Info> - Running suricata under test mode
4/10/2023 -- 14:24:43 - <Notice> - This is Suricata version 6.0.10 RELEASE running in SYSTEM mode
4/10/2023 -- 14:24:43 - <Info> - CPUs/cores online: 2
4/10/2023 -- 14:24:43 - <Info> - fast output device (regular) initialized: fast.log
4/10/2023 -- 14:24:43 - <Info> - eve-log output device (regular) initialized: eve.json
4/10/2023 -- 14:24:43 - <Info> - stats output device (regular) initialized: stats.log
4/10/2023 -- 14:24:53 - <Info> - 1 rule files processed. 35175 rules successfully loaded, 0 rules failed
4/10/2023 -- 14:24:53 - <Info> - Threshold config parsed: 0 rule(s) found
4/10/2023 -- 14:24:54 - <Info> - 35178 signatures processed. 1255 are IP-only rules, 5282 are inspecting packet payload, 28436 inspect application layer, 108 are decoder event only
4/10/2023 -- 14:25:07 - <Notice> - Configuration provided was successfully loaded. Exiting.
4/10/2023 -- 14:25:07 - <Info> - cleaning up signature grouping structure... complete

The -T flag instructs Suricata to run in testing mode, the -c flag configures the location of the configuration file, and the -v flag prints the verbose output of the command. Depending upon your system configuration and the number of rules added, the command can take a few minutes to finish.
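The test run reports how many rules were loaded and how many failed. If you run this check from a script, you may want it to fail whenever any rule is rejected. The following is a minimal sketch, assuming the Suricata 6.0.x wording shown above ("N rules successfully loaded, M rules failed"); check_rule_load is a hypothetical helper name, and other Suricata versions may word that line differently.

```shell
# check_rule_load (hypothetical helper): read `suricata -T -v` output on stdin,
# print the rule summary line, and return non-zero if any rule failed to load
# (status 1) or if no summary line was found at all (status 2).
check_rule_load() {
  local summary failed
  summary="$(grep -E '[0-9]+ rules successfully loaded, [0-9]+ rules failed' | tail -n 1)"
  [ -n "$summary" ] || return 2
  echo "$summary"
  failed="$(printf '%s\n' "$summary" | sed -E 's/.* ([0-9]+) rules failed.*/\1/')"
  [ "$failed" -eq 0 ]
}

# Example:
#   sudo suricata -T -c /etc/suricata/suricata.yaml -v 2>&1 | check_rule_load
```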

Step 5 - Running Suricata

Now that Suricata is configured and set up, it is time to run the application.

$ sudo systemctl start suricata

Check the status of the process.

$ sudo systemctl status suricata

You should see the following output if everything is working correctly.

● suricata.service - Suricata IDS/IDP daemon

Loaded: loaded (/lib/systemd/system/suricata.service; enabled; preset: enabled)

Active: active (running) since Wed 2023-10-04 14:25:49 UTC; 6s ago

Docs: man:suricata(8)

man:suricatasc(8)

https://suricata-ids.org/docs/

Process: 1283 ExecStart=/usr/bin/suricata -D --af-packet -c /etc/suricata/suricata.yaml --pidfile /run/suricata.pid (code=exited, status=0/SUCCESS)

Main PID: 1284 (Suricata-Main)

Tasks: 1 (limit: 4652)

Memory: 211.7M

CPU: 6.132s

CGroup: /system.slice/suricata.service

└─1284 /usr/bin/suricata -D --af-packet -c /etc/suricata/suricata.yaml --pidfile /run/suricata.pid

Oct 04 14:25:49 suricata systemd[1]: Starting suricata.service - Suricata IDS/IDP daemon...

Oct 04 14:25:49 suricata suricata[1283]: 4/10/2023 -- 14:25:49 - <Notice> - This is Suricata version 6.0.10 RELEASE running in SYSTEM mode

Oct 04 14:25:49 suricata systemd[1]: Started suricata.service - Suricata IDS/IDP daemon.

The process can take a few minutes to finish parsing all the rules. Therefore, the above status check is not a complete indication of whether Suricata is up and ready. You can monitor the log file for that using the following command.

$ sudo tail -f /var/log/suricata/suricata.log

If you see the following line in the log file, it means Suricata is running and ready to monitor network traffic. Exit the tail command by pressing the CTRL+C keys.

4/10/2023 -- 14:26:12 - <Info> - All AFP capture threads are running.
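Instead of watching tail by hand, you can poll the log until the ready message appears. The following is a sketch using grep in a loop; wait_for_log_line is a hypothetical name, the timeout is given in seconds, and it assumes the message text shown above.

```shell
# wait_for_log_line FILE PATTERN [TIMEOUT] (hypothetical helper): poll FILE once
# per second until a line matches the extended regex PATTERN. Returns 0 when a
# match is found, or 1 after TIMEOUT seconds (default 300) without a match.
wait_for_log_line() {
  local file="$1" pattern="$2" timeout="${3:-300}" waited=0
  until grep -qE -- "$pattern" "$file" 2>/dev/null; do
    if [ "$waited" -ge "$timeout" ]; then
      return 1
    fi
    sleep 1
    waited=$((waited + 1))
  done
}
```

For example, from a root shell (in case your user cannot read the log): `wait_for_log_line /var/log/suricata/suricata.log 'All AFP capture threads are running' 300`. Because the function searches the whole file, a message left over from an earlier run also counts, so check the timestamp of the matching line if that matters.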

Step 6 - Testing Suricata Rules

We will check whether Suricata is detecting any suspicious traffic. The Suricata guide recommends testing the ET Open rule number 2100498 using the following command.

$ curl http://testmynids.org/uid/index.html

You will get the following response.

uid=0(root) gid=0(root) groups=0(root)

The above command pretends to return the output of the id command that can be run on a compromised system. To test whether Suricata detected the traffic, you need to check the log file using the specified rule number.

$ grep 2100498 /var/log/suricata/fast.log

If your request used IPv6, you should see the following output.

10/04/2023-14:26:37.511168 [**] [1:2100498:7] GPL ATTACK_RESPONSE id check returned root [**] [Classification: Potentially Bad Traffic] [Priority: 2] {TCP} 2600:9000:23d0:a200:0018:30b3:e400:93a1:80 -> 2a03:b0c0:0002:00d0:0000:0000:0e1f:c001:53568

If your request used IPv4, you would see the following output.

10/04/2023-14:26:37.511168 [**] [1:2100498:7] GPL ATTACK_RESPONSE id check returned root [**] [Classification: Potentially Bad Traffic] [Priority: 2] {TCP} 108.158.221.5:80 -> 95.179.185.42:36364

Suricata also logs events to the /var/log/suricata/eve.json file in JSON format. Reading and interpreting those events with a tool such as jq is outside the scope of this tutorial.
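For a quick look without jq, plain grep can pick out the alert records for a single rule. This is a rough sketch, not a replacement for a JSON parser: alerts_for_sid is a hypothetical helper name, and it assumes Suricata's default EVE layout of one compact JSON object per line with no spaces after the colons, and that another field follows signature_id inside the alert object.

```shell
# alerts_for_sid FILE SID (hypothetical helper): print EVE records from FILE
# that are alerts for signature ID SID. The trailing comma in the second
# pattern stops SID 2100498 from also matching, say, 21004981.
alerts_for_sid() {
  grep -F '"event_type":"alert"' "$1" | grep -F "\"signature_id\":$2,"
}
```

For the test request above, `alerts_for_sid /var/log/suricata/eve.json 2100498` should print the matching alert records; run it from a root shell if your user cannot read the log. jq remains the more robust choice once you need to read specific fields.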

PART 2

We are done with part one of the tutorial, where we installed Suricata and tested it. The next part involves installing the Elastic Stack and setting it up to visualize Suricata's logs. Perform part two on the second server unless otherwise specified.

Step 7 - Install Elasticsearch

The first step in installing Elasticsearch involves adding the Elastic GPG key to your server.

$ wget -qO - https://artifacts.elastic.co/GPG-KEY-elasticsearch | sudo gpg --dearmor -o /usr/share/keyrings/elasticsearch-keyring.gpg

Create a repository for the Elasticsearch package by creating the file /etc/apt/sources.list.d/elastic-8.x.list.

$ echo "deb [signed-by=/usr/share/keyrings/elasticsearch-keyring.gpg] https://artifacts.elastic.co/packages/8.x/apt stable main" | sudo tee /etc/apt/sources.list.d/elastic-8.x.list

Update your system's repository list.

$ sudo apt update

Install Elasticsearch.

$ sudo apt install elasticsearch

You will get the following output on Elasticsearch's installation.

--------------------------- Security autoconfiguration information ------------------------------

Authentication and authorization are enabled.
TLS for the transport and HTTP layers is enabled and configured.

The generated password for the elastic built-in superuser is : IuRTjJr+=NqIClxZwKBn

If this node should join an existing cluster, you can reconfigure this with
'/usr/share/elasticsearch/bin/elasticsearch-reconfigure-node --enrollment-token <token-here>'
after creating an enrollment token on your existing cluster.

You can complete the following actions at any time:

Reset the password of the elastic built-in superuser with
'/usr/share/elasticsearch/bin/elasticsearch-reset-password -u elastic'.

Generate an enrollment token for Kibana instances with
'/usr/share/elasticsearch/bin/elasticsearch-create-enrollment-token -s kibana'.

Generate an enrollment token for Elasticsearch nodes with
'/usr/share/elasticsearch/bin/elasticsearch-create-enrollment-token -s node'.

-------------------------------------------------------------------------------------------------

We will use this information later on.

Locate your server's private IP address using the following command.

$ ip -brief address show
lo UNKNOWN 127.0.0.1/8 ::1/128
eth0 UP 159.223.220.228/20 10.18.0.5/16 2a03:b0c0:2:d0::e0e:c001/64 fe80::841e:feff:fee4:e653/64
eth1 UP 10.133.0.2/16 fe80::d865:d5ff:fe29:b50f/64

Note down the private IP address of your server (10.133.0.2 in this case). We will refer to it as your_private_IP. The public IP address of the server (159.223.220.228) will be referred to as your_public_IP in the rest of the tutorial. Also, note the name of your server's private network interface, eth1.
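If you would rather capture the private address in a script than copy it by hand, awk can extract it from the same brief listing. This is a minimal sketch; iface_ipv4 is a hypothetical helper name, and it assumes the `name state address/prefix ...` column layout shown above.

```shell
# iface_ipv4 IFACE (hypothetical helper): read `ip -brief address show` output
# on stdin and print the first IPv4 address of IFACE without its prefix length.
iface_ipv4() {
  awk -v ifc="$1" '$1 == ifc {
    for (i = 3; i <= NF; i++)
      if ($i ~ /^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+\//) { split($i, a, "/"); print a[1]; exit }
  }'
}

# Example:
#   ip -brief address show | iface_ipv4 eth1
```

With the output above, `ip -brief address show | iface_ipv4 eth1` would print 10.133.0.2.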

Step 8 - Configure Elasticsearch

Elasticsearch stores its configuration in the /etc/elasticsearch/elasticsearch.yml file. Open the file for editing.

$ sudo nano /etc/elasticsearch/elasticsearch.yml

Elasticsearch only accepts local connections by default. We need to change it so that Kibana can access it over the private IP address.

Find the line #network.host: 192.168.0.1 and add the following line right below it, as shown below.

# By default Elasticsearch is only accessible on localhost. Set a different
# address here to expose this node on the network:
#
#network.host: 192.168.0.1
network.bind_host: ["127.0.0.1", "your_private_IP"]
#
# By default Elasticsearch listens for HTTP traffic on the first free port it
# finds starting at 9200. Set a specific HTTP port here:

This ensures that Elasticsearch can still accept local connections while being available to Kibana over the private IP address.

The next step is to ensure that Elasticsearch is configured to run as a single node. If you are going to use multiple Elasticsearch nodes, skip both changes below and save the file.

To do that, add the following line at the end of the file.

. . .
discovery.type: single-node

Also, comment out the following line by adding a hash (#) in front of it.

#cluster.initial_master_nodes: ["kibana"]

Once you are finished, save the file by pressing Ctrl + X and entering Y when prompted.

Configure Firewall

Add the proper firewall rules for Elasticsearch so that it is accessible via the private network.

$ sudo ufw allow in on eth1
$ sudo ufw allow out on eth1

Replace eth1 in both commands with the name of the private network interface you noted in Step 7.

Start Elasticsearch

Reload the service daemon.

$ sudo systemctl daemon-reload

Enable the Elasticsearch service.

$ sudo systemctl enable elasticsearch

Now that you have configured Elasticsearch, it is time to start the service.

$ sudo systemctl start elasticsearch

Check the status of the service.

$ sudo systemctl status elasticsearch

● elasticsearch.service - Elasticsearch

Loaded: loaded (/lib/systemd/system/elasticsearch.service; enabled; preset: enabled)

Active: active (running) since Wed 2023-10-04 14:30:55 UTC; 8s ago

Docs: https://www.elastic.co

Main PID: 1731 (java)

Tasks: 71 (limit: 4652)

Memory: 2.3G

CPU: 44.355s

CGroup: /system.slice/elasticsearch.service

Create Elasticsearch Passwords

Elasticsearch's security features are enabled by default, and a password for the elastic superuser was generated during installation. You can use that password, but it is recommended to reset it to one of your own.

Run the following command to reset the Elasticsearch password. Choose a strong password.

$ sudo /usr/share/elasticsearch/bin/elasticsearch-reset-password -u elastic -i
This tool will reset the password of the [elastic] user.
You will be prompted to enter the password.
Please confirm that you would like to continue [y/N]y

Enter password for [elastic]: <ENTER-PASSWORD>
Re-enter password for [elastic]: <CONFIRM-PASSWORD>
Password for the [elastic] user successfully reset.

Now, let us test if Elasticsearch responds to queries.

$ sudo curl --cacert /etc/elasticsearch/certs/http_ca.crt -u elastic https://localhost:9200

Enter host password for user 'elastic':

{

"name" : "kibana",

"cluster_name" : "elasticsearch",

"cluster_uuid" : "KGYx4poLSxKhPyOlYrMq1g",

"version" : {

"number" : "8.10.2",

"build_flavor" : "default",

"build_type" : "deb",

"build_hash" : "6d20dd8ce62365be9b1aca96427de4622e970e9e",

"build_date" : "2023-09-19T08:16:24.564900370Z",

"build_snapshot" : false,

"lucene_version" : "9.7.0",

"minimum_wire_compatibility_version" : "7.17.0",

"minimum_index_compatibility_version" : "7.0.0"

},

"tagline" : "You Know, for Search"

}

This confirms that Elasticsearch is fully functional and running smoothly.
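Beyond the root endpoint, Elasticsearch's _cluster/health API reports an overall status of green, yellow, or red. The sketch below extracts that field with sed so that a script can act on it; cluster_status is a hypothetical helper name, and it assumes the status key and its value appear on the same line, which holds for both the compact and the pretty-printed response.

```shell
# cluster_status (hypothetical helper): read a _cluster/health JSON response on
# stdin and print the value of its "status" field (green, yellow, or red).
cluster_status() {
  sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([a-z]*\)".*/\1/p'
}

# Example on the Elasticsearch server (prompts for the elastic password):
#   sudo curl -s --cacert /etc/elasticsearch/certs/http_ca.crt -u elastic \
#     https://localhost:9200/_cluster/health | cluster_status
```

On a single node, yellow is common: it means all primary shards are assigned but some replica shards are not, because there is no second node to hold them.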

Step 9 - Install and Configure Kibana

Install Kibana.

$ sudo apt install kibana

The first step in configuring Kibana is to generate the encryption keys used by its xpack security features. Kibana uses these keys to encrypt data, such as saved objects, reporting data, and session information, that it stores in Elasticsearch. The utility to generate them is located in the /usr/share/kibana/bin directory.

$ sudo /usr/share/kibana/bin/kibana-encryption-keys generate -q --force

The -q flag suppresses the command instructions, and the --force flag ensures fresh secrets are generated. You will receive an output like the following.

xpack.encryptedSavedObjects.encryptionKey: 248eb61d444215a6e710f6d1d53cd803
xpack.reporting.encryptionKey: aecd17bf4f82953739a9e2a9fcad1891
xpack.security.encryptionKey: 2d733ae5f8ed5f15efd75c6d08373f36

Copy the output. Open Kibana's configuration file at /etc/kibana/kibana.yml for editing.

$ sudo nano /etc/kibana/kibana.yml

Paste the output of the previous command at the end of the file.

. . .
# Maximum number of documents loaded by each shard to generate autocomplete suggestions.
# This value must be a whole number greater than zero. Defaults to 100_000
#unifiedSearch.autocomplete.valueSuggestions.terminateAfter: 100000
xpack.encryptedSavedObjects.encryptionKey: 3ff21c6daf52ab73e932576c2e981711
xpack.reporting.encryptionKey: edf9c3863ae339bfbd48c713efebcfe9
xpack.security.encryptionKey: 7841fd0c4097987a16c215d9429daec1
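Instead of copying and pasting by hand, you could pipe the generator's output straight into the configuration file. This is a minimal sketch; append_kibana_keys is a hypothetical helper name, and it assumes the `-q` output consists of `xpack.<feature>.encryptionKey: <value>` lines as shown above, keeping only those lines and discarding anything else.

```shell
# append_kibana_keys FILE (hypothetical helper): read kibana-encryption-keys
# output on stdin and append only the xpack.*.encryptionKey lines to FILE.
append_kibana_keys() {
  grep -E '^xpack\.[A-Za-z.]+\.encryptionKey: ' | tee -a "$1" >/dev/null
}

# Example, from a root shell so that /etc/kibana/kibana.yml is writable:
#   /usr/share/kibana/bin/kibana-encryption-keys generate -q --force \
#     | append_kibana_keys /etc/kibana/kibana.yml
```

Run it only once: every run appends a fresh set of keys, and duplicate keys in kibana.yml would need to be cleaned up by hand.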

Copy the CA certificate file /etc/elasticsearch/certs/http_ca.crt to the /etc/kibana directory.

$ sudo cp /etc/elasticsearch/certs/http_ca.crt /etc/kibana/

Configure Kibana Host

Kibana needs to be configured so that it's accessible on the server's private IP address. Find the line #server.host: "localhost" in the file and add the following line right below it as shown.

# Kibana is served by a back end server. This setting specifies the port to use.
#server.port: 5601

# Specifies the address to which the Kibana server will bind. IP addresses and host names are both valid values.
# The default is 'localhost', which usually means remote machines will not be able to connect.
# To allow connections from remote users, set this parameter to a non-loopback address.
#server.host: "localhost"
server.host: "your_private_IP"

Turn off Telemetry

Kibana sends usage data (telemetry) back to Elastic by default, which can affect performance and poses a privacy risk. Therefore, you should turn off telemetry. Add the following settings at the end of the file. The first setting turns telemetry off, and the second prevents that setting from being changed from the Advanced Settings section in Kibana.

telemetry.optIn: false
telemetry.allowChangingOptInStatus: false

Configure SSL

In the same file, find the variable elasticsearch.ssl.certificateAuthorities, uncomment it, and change its value as shown below.

elasticsearch.ssl.certificateAuthorities: [ "/etc/kibana/http_ca.crt" ]

Once you are finished, save the file by pressing Ctrl + X and entering Y when prompted.

Configure Kibana Access

The next step is to generate an enrollment token. You will paste it into the Kibana web interface when you first open it, to connect Kibana to Elasticsearch.

$ sudo /usr/share/elasticsearch/bin/elasticsearch-create-enrollment-token -s kibana
eyJ2ZXIiOiI4LjEwLjIiLCJhZHIiOlsiMTU5LjIyMy4yMjAuMjI4OjkyMDAiXSwiZmdyIjoiOGMyYTcyYmUwMDg5NTJlOGMxMWUwNDgzYjE2OTcwOTMxZWZlNzYyMDAwNzhhOGMwNTNmNWU0NGJiY2U4NzcwMSIsImtleSI6IlQ5eE0tNG9CUWZDaGdaakUwbFAzOk9QTU5uWVRnUWppU3lvU0huOUoyMHcifQ==

Starting Kibana

Now that you have configured secure access and networking for Kibana, start and enable the service.

$ sudo systemctl enable kibana --now

Check the status to see if it is running.

$ sudo systemctl status kibana

● kibana.service - Kibana

Loaded: loaded (/lib/systemd/system/kibana.service; enabled; preset: enabled)

Active: active (running) since Wed 2023-10-04 15:27:28 UTC; 9s ago

Docs: https://www.elastic.co

Main PID: 2686 (node)

Tasks: 11 (limit: 4652)

Memory: 241.5M

CPU: 9.902s

CGroup: /system.slice/kibana.service

└─2686 /usr/share/kibana/bin/../node/bin/node /usr/share/kibana/bin/../src/cli/dist

Oct 04 15:27:28 kibana systemd[1]: Started kibana.service - Kibana.

Step 10 - Install and Configure Filebeat

Filebeat is installed on the Suricata server, so switch back to it and add the Elastic GPG key to get started.

$ wget -qO - https://artifacts.elastic.co/GPG-KEY-elasticsearch | sudo gpg --dearmor -o /usr/share/keyrings/elasticsearch-keyring.gpg

Create the elastic repository.

$ echo "deb [signed-by=/usr/share/keyrings/elasticsearch-keyring.gpg] https://artifacts.elastic.co/packages/8.x/apt stable main" | sudo tee /etc/apt/sources.list.d/elastic-8.x.list

Update the system repository list.

$ sudo apt update


Install Filebeat.

$ sudo apt install filebeat

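Before configuring Filebeat, you need to copy the http_ca.crt file from the Elasticsearch server to the Filebeat server. The /etc/elasticsearch directory is readable only by root and the elasticsearch group, so first run the following command on the Elasticsearch server to place a copy of the certificate in your home directory, owned by your user.

$ sudo cp /etc/elasticsearch/certs/http_ca.crt ~/ && sudo chown $USER: ~/http_ca.crt

Then run the following commands on the Filebeat server. The certificate is copied to /tmp first because your regular user cannot write to /etc/filebeat.

$ scp username@your_public_IP:http_ca.crt /tmp/
$ sudo mv /tmp/http_ca.crt /etc/filebeat/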
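To confirm that the copied file is identical to the original, you can compare the SHA-256 fingerprints of both certificates. The following is a minimal sketch built on the openssl command-line tool; ca_fingerprint is a hypothetical helper name.

```shell
# ca_fingerprint FILE (hypothetical helper): print the SHA-256 fingerprint of
# the PEM certificate in FILE as colon-separated hex, without the label.
ca_fingerprint() {
  openssl x509 -noout -fingerprint -sha256 -in "$1" | cut -d= -f2
}

# On the Elasticsearch server:
#   sudo openssl x509 -noout -fingerprint -sha256 -in /etc/elasticsearch/certs/http_ca.crt
# On the Filebeat server:
#   ca_fingerprint /etc/filebeat/http_ca.crt
```

The two fingerprints should be identical; if they differ, copy the certificate again.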

Filebeat stores its configuration in the /etc/filebeat/filebeat.yml file. Open it for editing.

$ sudo nano /etc/filebeat/filebeat.yml

The first thing you need to do is connect it to Kibana's dashboard. Find the line #host: "localhost:5601" in the Kibana section and add the following lines right below it as shown.

. . .
# Starting with Beats version 6.0.0, the dashboards are loaded via the Kibana API.
# This requires a Kibana endpoint configuration.
setup.kibana:

  # Kibana Host
  # Scheme and port can be left out and will be set to the default (http and 5601)
  # In case you specify and additional path, the scheme is required: http://localhost:5601/path
  # IPv6 addresses should always be defined as: https://[2001:db8::1]:5601
  #host: "localhost:5601"
  host: "your_private_IP:5601"
  protocol: "http"
  ssl.enabled: true
  ssl.certificate_authorities: ["/etc/filebeat/http_ca.crt"]
. . .

Next, find the Elasticsearch Output section of the file, edit the values of hosts, protocol, username, and password, and add the two ssl settings as shown below. Use elastic as the username, and for the password, use the one you set in Step 8 of this tutorial.

output.elasticsearch:
  # Array of hosts to connect to.
  hosts: ["your_private_IP:9200"]

  # Protocol - either `http` (default) or `https`.
  protocol: "https"

  # Authentication credentials - either API key or username/password.
  #api_key: "id:api_key"
  username: "elastic"
  password: "bd1YJfhSa8RC8SMvTIwg"
  ssl.certificate_authorities: ["/etc/filebeat/http_ca.crt"]
  ssl.verification_mode: full
. . .

Add the following line at the bottom of the file.

setup.ilm.overwrite: true

Once you are finished, save the file by pressing Ctrl + X and entering Y when prompted.

Test the connection from the Filebeat to the Elasticsearch server. You will be asked for your Elasticsearch password.

$ curl -v --cacert /etc/filebeat/http_ca.crt https://your_private_IP:9200 -u elastic

You will get the following output.

Enter host password for user 'elastic':

* Trying 10.133.0.2:9200...

* Connected to 10.133.0.2 (10.133.0.2) port 9200 (#0)

* ALPN: offers h2,http/1.1

* TLSv1.3 (OUT), TLS handshake, Client hello (1):

* CAfile: /etc/filebeat/http_ca.crt

* CApath: /etc/ssl/certs

* TLSv1.3 (IN), TLS handshake, Server hello (2):

* TLSv1.3 (IN), TLS handshake, Encrypted Extensions (8):

* TLSv1.3 (IN), TLS handshake, Certificate (11):

* TLSv1.3 (IN), TLS handshake, CERT verify (15):

* TLSv1.3 (IN), TLS handshake, Finished (20):

* TLSv1.3 (OUT), TLS change cipher, Change cipher spec (1):

* TLSv1.3 (OUT), TLS handshake, Finished (20):

* SSL connection using TLSv1.3 / TLS_AES_256_GCM_SHA384

* ALPN: server did not agree on a protocol. Uses default.

* Server certificate:

* subject: CN=kibana

* start date: Oct 4 14:28:33 2023 GMT

* expire date: Oct 3 14:28:33 2025 GMT

* subjectAltName: host "10.133.0.2" matched cert's IP address!

* issuer: CN=Elasticsearch security auto-configuration HTTP CA

* SSL certificate verify ok.

* using HTTP/1.x

* Server auth using Basic with user 'elastic'

> GET / HTTP/1.1

> Host: 10.133.0.2:9200

> Authorization: Basic ZWxhc3RpYzpsaWZlc3Vja3M2NjIwMDI=

> User-Agent: curl/7.88.1

> Accept: */*

>

* TLSv1.3 (IN), TLS handshake, Newsession Ticket (4):

< HTTP/1.1 200 OK

< X-elastic-product: Elasticsearch

< content-type: application/json

< content-length: 530

<

{

"name" : "kibana",

"cluster_name" : "elasticsearch",

"cluster_uuid" : "KGYx4poLSxKhPyOlYrMq1g",

"version" : {

"number" : "8.10.2",

"build_flavor" : "default",

"build_type" : "deb",

"build_hash" : "6d20dd8ce62365be9b1aca96427de4622e970e9e",

"build_date" : "2023-09-19T08:16:24.564900370Z",

"build_snapshot" : false,

"lucene_version" : "9.7.0",

"minimum_wire_compatibility_version" : "7.17.0",

"minimum_index_compatibility_version" : "7.0.0"

},

"tagline" : "You Know, for Search"

}

* Connection #0 to host 10.133.0.2 left intact

Next, enable Filebeat's built-in Suricata module.

$ sudo filebeat modules enable suricata

Open the /etc/filebeat/modules.d/suricata.yml file for editing.

$ sudo nano /etc/filebeat/modules.d/suricata.yml

Edit the file as shown below. Change the value of the enabled variable to true. Also, uncomment the variable var.paths and set its value as shown.

# Module: suricata
# Docs: https://www.elastic.co/guide/en/beats/filebeat/8.10/filebeat-module-suricata.html

- module: suricata
  # All logs
  eve:
    enabled: true

    # Set custom paths for the log files. If left empty,
    # Filebeat will choose the paths depending on your OS.
    var.paths: ["/var/log/suricata/eve.json"]

Once you are finished, save the file by pressing Ctrl + X and entering Y when prompted.

The final step in configuring Filebeat is to load the SIEM dashboards and pipelines into Elasticsearch using the filebeat setup command.

$ sudo filebeat setup

It may take a few minutes for the command to finish. Once finished, you should receive the following output.

Overwriting ILM policy is disabled. Set `setup.ilm.overwrite: true` for enabling.

Index setup finished.
Loading dashboards (Kibana must be running and reachable)
Loaded dashboards
Loaded Ingest pipelines

Start the Filebeat service.

$ sudo systemctl start filebeat

Check the status of the service.

$ sudo systemctl status filebeat

Step 11 - Accessing Kibana Dashboard

Since Kibana is configured to listen only on the Elasticsearch server's private IP address, you have two options to access it. The first method is to use an SSH tunnel to the Elasticsearch server from your PC. This forwards port 5601 on your PC to the server's private IP address, so you can access Kibana from your PC at http://localhost:5601. However, with this method you won't be able to access Kibana from anywhere else.

The other option is to install Nginx on your Suricata server and use it as a reverse proxy to reach Kibana via the Elasticsearch server's private IP address. We will discuss both methods. Choose the one that fits your requirements.

Using SSH Local Tunnel

If you are using Windows 10 or Windows 11, you can create the SSH local tunnel from Windows PowerShell. On Linux or macOS, use the terminal. You will need to configure SSH access to the server if you haven't already.

Run the following command in your computer's terminal to create the SSH Tunnel.

$ ssh -L 5601:your_private_IP:5601 navjot@your_public_IP -N

- The -L flag creates a local SSH tunnel, which forwards traffic from a port on your PC to the server.

- your_private_IP:5601 is the address your traffic is forwarded to on the server side. Replace your_private_IP with the private IP address of your Elasticsearch server.

- navjot is the SSH user on the server. Replace it with your own username.

- your_public_IP is the public IP address of the Elasticsearch server, which is used to open the SSH connection.

- The -N flag tells OpenSSH not to execute any remote command, but to keep the connection alive for as long as the tunnel runs.
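If you open the tunnel often on Linux or macOS, a small wrapper saves retyping it. The following is a sketch; `kibana_tunnel` is a hypothetical helper, and setting DRY_RUN=1 only prints the command it would run. The optional fourth argument picks a different local port, which is the first number in the -L specification; the last number stays 5601 because that is where Kibana listens on the server.

```shell
#!/bin/sh
# Hypothetical helper wrapping: ssh -N -L local_port:private_ip:5601 user@public_ip
kibana_tunnel() {
  private_ip=$1           # Elasticsearch server's private IP (Kibana listens here)
  public_ip=$2            # Elasticsearch server's public IP (SSH endpoint)
  user=${3:-$(id -un)}    # SSH user on that server, defaults to your local name
  local_port=${4:-5601}   # port to open on your PC
  if [ "${DRY_RUN:-0}" = 1 ]; then
    # Preview only: print the command instead of running it.
    echo "ssh -N -L $local_port:$private_ip:5601 $user@$public_ip"
  else
    ssh -N -L "$local_port:$private_ip:5601" "$user@$public_ip"
  fi
}
```

For example, `kibana_tunnel 10.0.0.5 203.0.113.10 navjot 8080` (example addresses) would make Kibana available at http://localhost:8080 instead of port 5601.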

Now that the tunnel is open, you can access Kibana by opening the URL http://localhost:5601 in your PC's browser. You will get the following screen.

You will need to keep the command running for as long as you need to access Kibana. Press Ctrl + C in your terminal to close the tunnel.

Using Nginx Reverse-proxy

This method is best suited if you want to access the dashboard from anywhere in the world.

Configure Firewall

Before proceeding further, you need to open HTTP and HTTPS ports in the firewall.

$ sudo ufw allow http
$ sudo ufw allow https

Install Nginx

Debian 12 ships with an older version of Nginx. To install the latest version, you need to add the official Nginx repository.

Import Nginx's signing key.

$ curl https://nginx.org/keys/nginx_signing.key | gpg --dearmor \
    | sudo tee /usr/share/keyrings/nginx-archive-keyring.gpg >/dev/null

Add the repository for Nginx's stable version.

$ echo "deb [signed-by=/usr/share/keyrings/nginx-archive-keyring.gpg] \
http://nginx.org/packages/debian `lsb_release -cs` nginx" \
    | sudo tee /etc/apt/sources.list.d/nginx.list
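The repository line depends on your Debian codename, which `lsb_release -cs` prints (bookworm on Debian 12). On minimal installations the lsb_release tool may be missing; as a sketch, the codename can also be read from /etc/os-release, which on Debian 12 defines VERSION_CODENAME. `nginx_source_line` is a hypothetical helper that only prints the line, so you can inspect it before writing it anywhere.

```shell
#!/bin/sh
# Hypothetical helper: print the nginx.org APT source line for a Debian codename.
# Without an argument it falls back to VERSION_CODENAME from /etc/os-release.
nginx_source_line() {
  codename=${1:-$(. /etc/os-release && echo "$VERSION_CODENAME")}
  echo "deb [signed-by=/usr/share/keyrings/nginx-archive-keyring.gpg]" \
    "http://nginx.org/packages/debian $codename nginx"
}
```

Usage: `nginx_source_line | sudo tee /etc/apt/sources.list.d/nginx.list` produces the same file as the echo command above.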

Update the system repositories.

$ sudo apt update

Install Nginx.

$ sudo apt install nginx

Verify the installation. The sudo in the command is essential on Debian because the nginx binary is installed in /usr/sbin, which is not in a regular user's PATH.

$ sudo nginx -v
nginx version: nginx/1.24.0

Start the Nginx server.

$ sudo systemctl start nginx

Install and configure SSL

The first step is to obtain a Let's Encrypt SSL certificate. We need to install Certbot to generate it. You can either install Certbot from Debian's repository or grab the latest version using the Snapd tool. We will be using the Snapd version.

Debian 12 doesn't come with Snapd installed. Install the Snapd package.

$ sudo apt install snapd

Run the following commands to ensure that your version of Snapd is up to date.

$ sudo snap install core && sudo snap refresh core

Install Certbot.

$ sudo snap install --classic certbot

Run the following command to create a symbolic link in the /usr/bin directory, which ensures that the certbot command can be run.

$ sudo ln -s /snap/bin/certbot /usr/bin/certbot

Confirm Certbot's installation.

$ certbot --version
certbot 2.7.0

Generate the SSL certificate for the domain kibana.example.com.

$ sudo certbot certonly --nginx --agree-tos --no-eff-email --staple-ocsp --preferred-challenges http -m name@example.com -d kibana.example.com

The above command will download a certificate to the /etc/letsencrypt/live/kibana.example.com directory on your server.

Generate a Diffie-Hellman group (DH parameters) file.

$ sudo openssl dhparam -dsaparam -out /etc/ssl/certs/dhparam.pem 4096

To check whether the SSL renewal is working fine, do a dry run of the process.

$ sudo certbot renew --dry-run

If you see no errors, you are all set. Your certificate will renew automatically.

Configure Nginx

Create and open the Nginx configuration file for Kibana.

$ sudo nano /etc/nginx/conf.d/kibana.conf

Paste the following code in it. Replace your_private_IP with the private IP address of your Elasticsearch server, and kibana.example.com with your domain.

server {
    listen 80;
    listen [::]:80;
    server_name kibana.example.com;
    return 301 https://$host$request_uri;
}

server {
    server_name kibana.example.com;
    charset utf-8;

    listen 443 ssl http2;
    listen [::]:443 ssl http2;

    access_log /var/log/nginx/kibana.access.log;
    error_log /var/log/nginx/kibana.error.log;

    ssl_certificate /etc/letsencrypt/live/kibana.example.com/fullchain.pem;
    ssl_certificate_key /etc/letsencrypt/live/kibana.example.com/privkey.pem;
    ssl_trusted_certificate /etc/letsencrypt/live/kibana.example.com/chain.pem;
    ssl_session_timeout 1d;
    ssl_session_cache shared:MozSSL:10m;
    ssl_session_tickets off;
    ssl_protocols TLSv1.2 TLSv1.3;
    ssl_ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-CHACHA20-POLY1305:ECDHE-RSA-CHACHA20-POLY1305:DHE-RSA-AES128-GCM-SHA256:DHE-RSA-AES256-GCM-SHA384;
    resolver 8.8.8.8;
    ssl_stapling on;
    ssl_stapling_verify on;
    ssl_dhparam /etc/ssl/certs/dhparam.pem;

    location / {
        proxy_pass http://your_private_IP:5601;
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
    }
}

Save the file by pressing Ctrl + X and entering Y when prompted.
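The configuration above contains two kinds of placeholders: the domain kibana.example.com and your_private_IP. If you keep a copy of it as a template, a sketch like the following substitutes both in one pass. `render_kibana_conf` and the template file name are hypothetical, and the sketch assumes neither value contains | or &, which this sed expression would treat specially.

```shell
#!/bin/sh
# Hypothetical helper: replace the tutorial's placeholders in a saved copy of
# kibana.conf and print the result. Assumes values contain no '|' or '&'.
render_kibana_conf() {
  template=$1     # a copy of the server blocks shown above
  domain=$2       # your real domain, replacing kibana.example.com
  private_ip=$3   # Elasticsearch server's private IP, replacing your_private_IP
  sed -e "s|kibana\.example\.com|$domain|g" \
      -e "s|your_private_IP|$private_ip|g" "$template"
}
```

For example, `render_kibana_conf kibana.conf.template kibana.yourdomain.com 10.0.0.5 | sudo tee /etc/nginx/conf.d/kibana.conf`. The certificate paths contain the domain as well, so they are rewritten to match the directory Certbot created for that domain.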

Open the file /etc/nginx/nginx.conf for editing.

$ sudo nano /etc/nginx/nginx.conf

Add the following line before the line include /etc/nginx/conf.d/*.conf;.

server_names_hash_bucket_size 64;

Save the file by pressing Ctrl + X and entering Y when prompted.
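The same edit can be scripted. The following sketch inserts the directive just before the first include /etc/nginx/conf.d/*.conf; line and does nothing if an uncommented server_names_hash_bucket_size line already exists, so running it twice is harmless. `add_hash_bucket_size` is a hypothetical helper; back up nginx.conf before trying it on the real file.

```shell
#!/bin/sh
# Hypothetical helper: add "server_names_hash_bucket_size 64;" just before the
# conf.d include line of nginx.conf, unless the directive is already set.
# If no such include line exists, the file content is left unchanged.
add_hash_bucket_size() {
  conf=${1:-/etc/nginx/nginx.conf}
  # Nothing to do if an uncommented directive already exists.
  if grep -Eq '^[[:space:]]*server_names_hash_bucket_size' "$conf"; then
    return 0
  fi
  tmp=$(mktemp) || return 1
  # Print the new directive once, right before the first matching include line.
  awk '
    /include \/etc\/nginx\/conf\.d\/\*\.conf;/ && !done {
      print "    server_names_hash_bucket_size 64;"
      done = 1
    }
    { print }
  ' "$conf" > "$tmp" && cat "$tmp" > "$conf"
  # Writing back with cat keeps the original file's owner and permissions.
  status=$?
  rm -f "$tmp"
  return "$status"
}
```

Run it as root, then verify the result with sudo nginx -t as shown next.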

Verify the configuration.

$ sudo nginx -t
nginx: the configuration file /etc/nginx/nginx.conf syntax is ok
nginx: configuration file /etc/nginx/nginx.conf test is successful

Restart the Nginx service.

$ sudo systemctl restart nginx

Your Kibana dashboard should be accessible via the URL https://kibana.example.com from anywhere you want.

Step 12 - Managing Kibana Dashboards

Before proceeding further with managing the dashboards, you need to add the base URL field in Kibana's configuration.

Open Kibana's configuration file.

$ sudo nano /etc/kibana/kibana.yml

Find the commented line #server.publicBaseUrl: "", remove the hash in front of it, and set its value as follows.

server.publicBaseUrl: "https://kibana.example.com"

Save the file by pressing Ctrl + X and entering Y when prompted.
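If you manage several Kibana hosts, this edit can also be scripted. The following is a sketch: it uncomments and rewrites an existing server.publicBaseUrl line (commented or not), or appends one if none exists. `set_public_base_url` is a hypothetical helper; it uses GNU sed's -i option, which is what Debian ships, and it assumes the URL contains no | or &.

```shell
#!/bin/sh
# Hypothetical helper: set server.publicBaseUrl in kibana.yml, uncommenting the
# default "#server.publicBaseUrl" line if present, otherwise appending it.
# Assumes GNU sed and a URL without '|' or '&' (special in this expression).
set_public_base_url() {
  yml=$1
  url=$2
  if grep -Eq '^#?[[:space:]]*server\.publicBaseUrl:' "$yml"; then
    sed -i -E "s|^#?[[:space:]]*server\.publicBaseUrl:.*|server.publicBaseUrl: \"$url\"|" "$yml"
  else
    printf 'server.publicBaseUrl: "%s"\n' "$url" >> "$yml"
  fi
}
```

Run it as root, for example with /etc/kibana/kibana.yml and https://kibana.example.com as the two arguments, then restart Kibana.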

Restart the Kibana service.

$ sudo systemctl restart kibana
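Kibana can take a few minutes to come back after a restart. Instead of reloading the browser, you can poll the URL. The sketch below treats any 2xx or 3xx response as ready, because while Kibana is still starting, or while Nginx cannot reach it, you typically get a 5xx status instead. `wait_for_kibana` and its default attempt count and delay are hypothetical choices, and it assumes curl is installed.

```shell
#!/bin/sh
# Hypothetical helper: poll a Kibana URL until it answers with 2xx/3xx.
# Arguments: URL, number of attempts, seconds between attempts.
wait_for_kibana() {
  url=${1:-https://kibana.example.com}
  attempts=${2:-30}
  delay=${3:-10}
  i=1
  while [ "$i" -le "$attempts" ]; do
    # curl prints 000 when it cannot connect or times out.
    code=$(curl -s -o /dev/null --max-time 5 -w '%{http_code}' "$url")
    case $code in
      2??|3??) echo "Kibana is responding (HTTP $code)"; return 0 ;;
    esac
    echo "Attempt $i/$attempts: HTTP ${code:-none}" >&2
    i=$((i + 1))
    if [ "$i" -le "$attempts" ]; then sleep "$delay"; fi
  done
  return 1
}
```

For example, `wait_for_kibana https://kibana.example.com 30 10` checks for up to about five minutes and returns a non-zero status if Kibana never answers.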

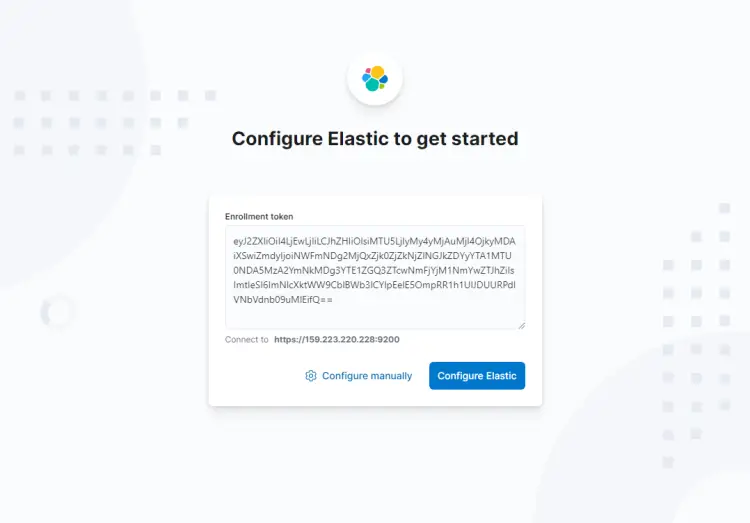

Wait for a few minutes and load the URL https://kibana.example.com in your browser. You will see a field for the enrollment token. Fill in the enrollment token you generated in Step 9.

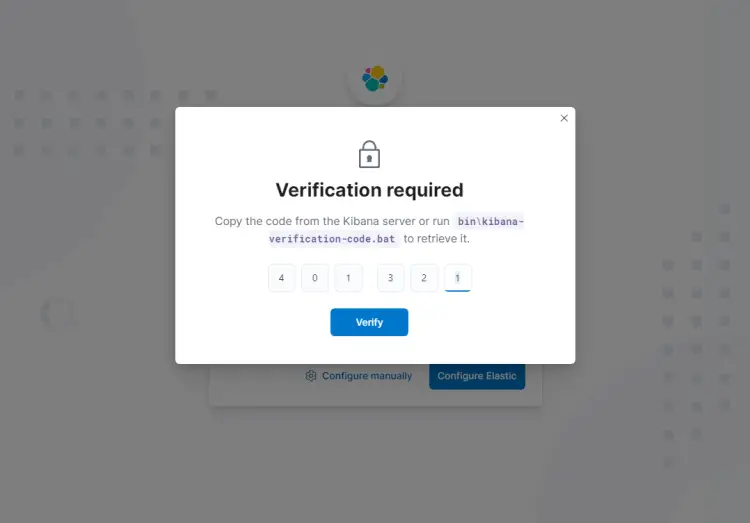

Click the Configure Elastic button to proceed. Next, you will be asked for the verification code.

Switch back to the Elasticsearch terminal and run the following command to generate the code. Enter this code on the page and click the Verify button to proceed.

$ sudo /usr/share/kibana/bin/kibana-verification-code

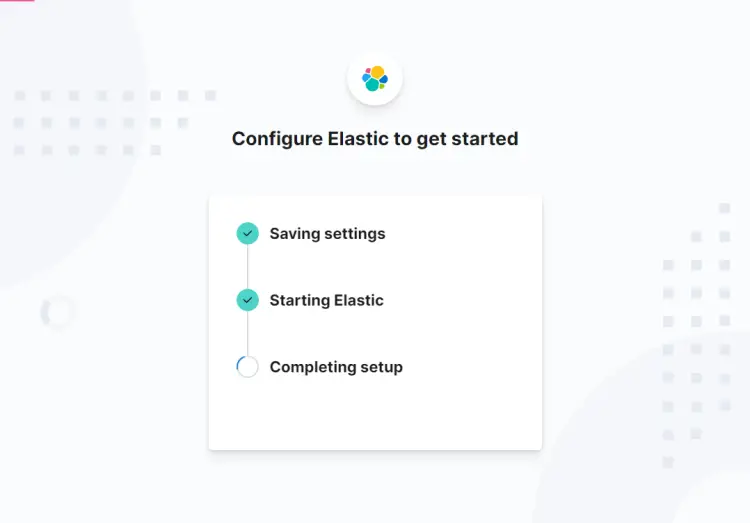

Wait for the Elastic setup to complete, which takes several minutes.

You will then be redirected to the login screen.

Log in with the username elastic and the password you generated earlier, and you will get the following screen.

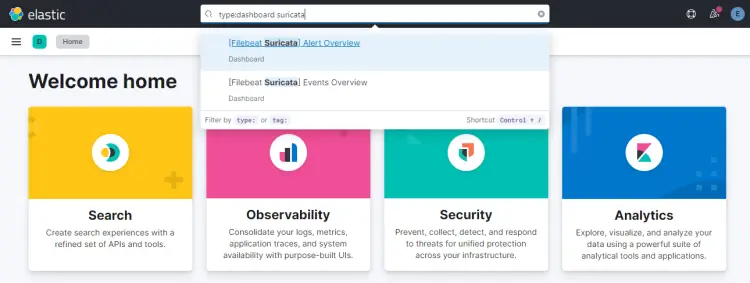

Type type:data suricata in the search box at the top to locate Suricata's information.

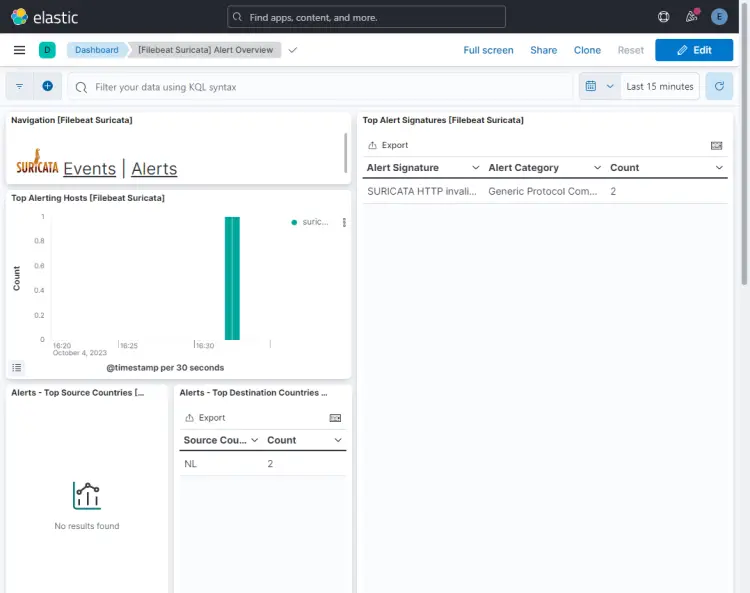

Click the first result ([Filebeat Suricata] Alert Overview), and you will get a screen similar to the following. By default, it shows the entries for only the last 15 minutes, but we are displaying it over a larger timespan to show more data for the tutorial.

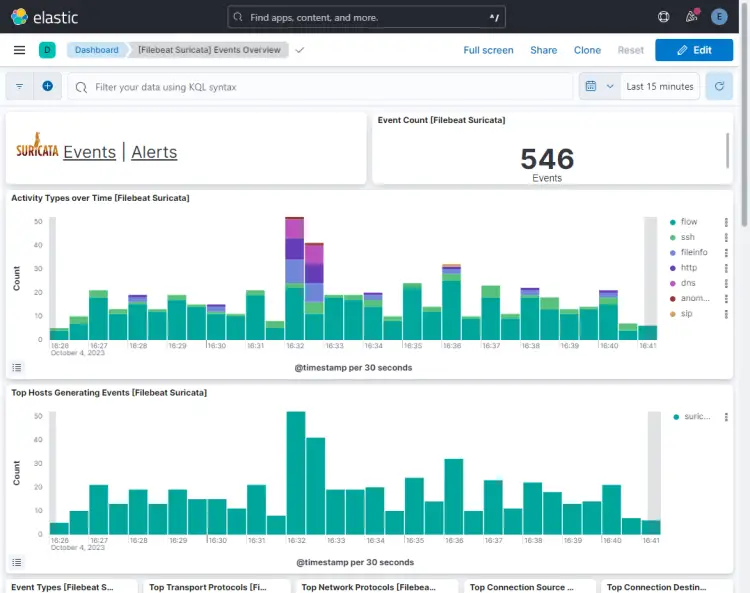

Click on the Events button to view all the logged events.

If you scroll down on the Events and Alerts pages, you can identify each event and alert by protocol type, source and destination ports, and source IP address. You can also view the countries the traffic originated from.

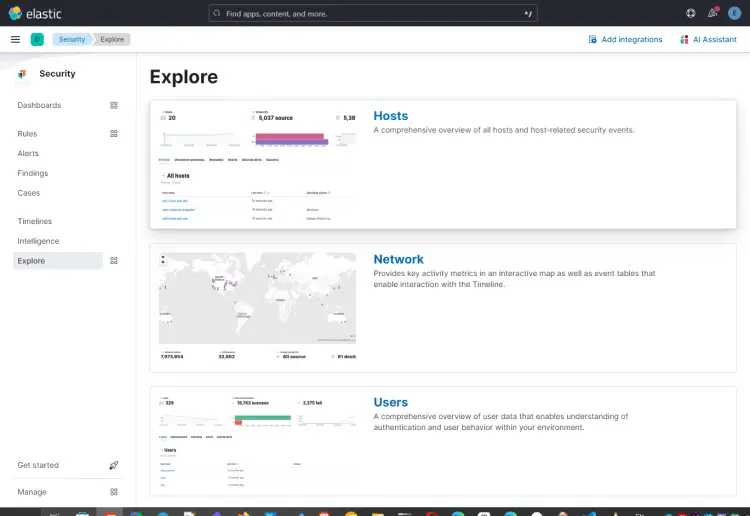

You can use Kibana and Filebeat to access and generate other types of dashboards. One of the useful built-in dashboards that you can use right away is the Security dashboard. Open Security >> Explore from the hamburger menu on the left.

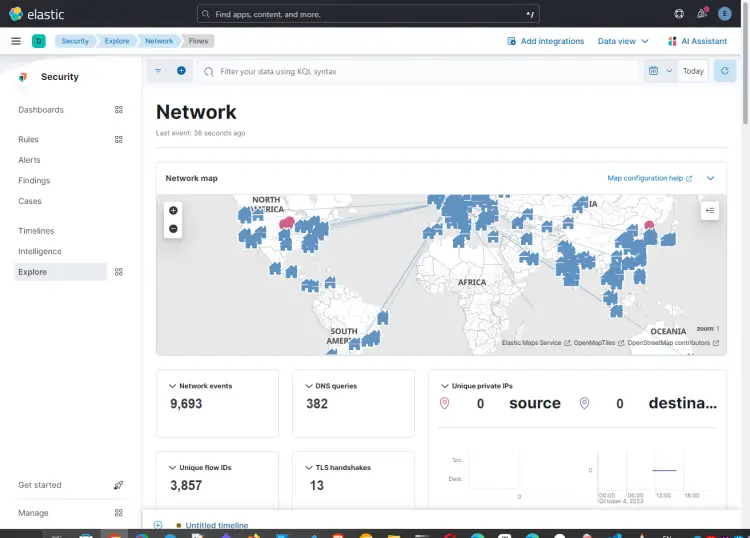

On the next page, select the Network option to open the associated dashboard. You will get the following screen.

You can add more dashboards, such as one for Nginx, by enabling and configuring the built-in Filebeat modules.

Conclusion

This concludes the tutorial for installing and configuring Suricata IDS with Elastic Stack on a Debian 12 server. You also configured Nginx as a reverse proxy to access Kibana dashboards externally. If you have any questions, post them in the comments below.