High-Availability Storage with GlusterFS on Ubuntu 18.04 LTS

GlusterFS is a scalable network filesystem that can grow to several petabytes and serve thousands of clients. It is an open source, distributed file system that aggregates disk storage resources from multiple servers into a single namespace. It is suitable for data-intensive tasks such as cloud storage and media streaming.

In this tutorial, I will show how to set up a high-availability storage server with GlusterFS on Ubuntu 18.04 LTS (Bionic Beaver). We will use three Ubuntu servers: one as a client and two as storage servers. Each storage server will be a mirror of the other, and files will be replicated across both storage servers.

Prerequisites

- 3 Ubuntu 18.04 Servers

- 10.0.15.10 - gfs01

- 10.0.15.11 - gfs02

- 10.0.15.12 - client01

- Root Privileges

What will we do?

- GlusterFS Pre-Installation

- Install GlusterFS Server

- Configure GlusterFS Servers

- Setup GlusterFS Client

- Testing Replicate/Mirroring

Step 1 - GlusterFS Pre-Installation

Before installing GlusterFS, we need to configure the hosts file and add the GlusterFS repository on every server.

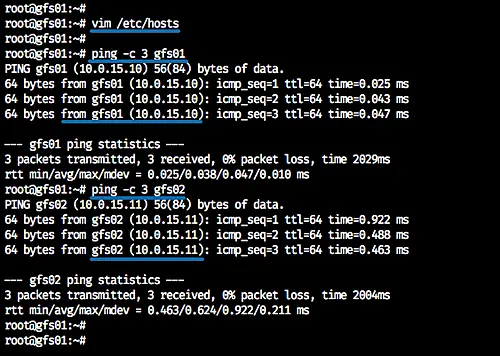

Configure Hosts File

Log in to each server and get root access with the 'sudo su' command, then edit the '/etc/hosts' file.

vim /etc/hosts

Paste the hosts configuration below.

10.0.15.10 gfs01

10.0.15.11 gfs02

10.0.15.12 client01

Save and exit.
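Because the same three entries have to be added on every machine, the edit can also be scripted. The following is a minimal sketch rather than part of the original procedure: `add_host_entry` is a hypothetical helper name, and it appends a line only when the hostname is not already mapped in the file, so running it twice does no harm.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of GlusterFS): append "IP NAME" to a hosts
# file unless NAME already appears as a field on a non-comment line.
add_host_entry() {
    local hosts_file="$1" ip="$2" name="$3"
    if ! grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$hosts_file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
}

# Example (run as root on each server):
#   add_host_entry /etc/hosts 10.0.15.10 gfs01
#   add_host_entry /etc/hosts 10.0.15.11 gfs02
#   add_host_entry /etc/hosts 10.0.15.12 client01
```

Trying the function against a scratch copy of the hosts file first is a cheap way to confirm it behaves as expected before touching '/etc/hosts'.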

Now ping each server using the hostname as below.

ping -c 3 gfs01

ping -c 3 gfs02

ping -c 3 client01

Each hostname should resolve to the IP address of the corresponding server.

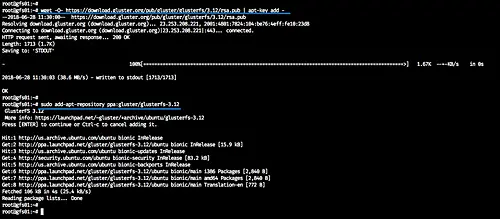

Add GlusterFS Repository

Install the software-properties-common package on the system.

sudo apt install software-properties-common -y

Add the GlusterFS key and repository by running the commands below.

wget -O- https://download.gluster.org/pub/gluster/glusterfs/3.12/rsa.pub | apt-key add -

sudo add-apt-repository ppa:gluster/glusterfs-3.12

The add-apt-repository command also refreshes the package lists, so the GlusterFS repository is now available on every system where it was run.

Step 2 - Install GlusterFS Server

In this step, we will install the glusterfs server on 'gfs01' and 'gfs02' servers.

Install glusterfs-server using the apt command.

sudo apt install glusterfs-server -y

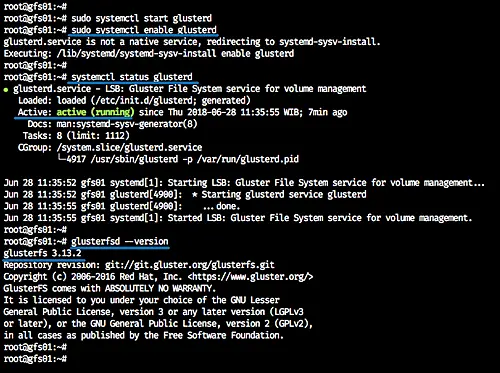

Now start the glusterd service and enable it to launch at every system boot.

sudo systemctl start glusterd

sudo systemctl enable glusterd

The GlusterFS server is now up and running on the 'gfs01' and 'gfs02' servers.

Check the services and the installed software version.

systemctl status glusterd

glusterfsd --version

Step 3 - Configure GlusterFS Servers

The glusterd services are now up and running. The next step is to configure the servers by creating a trusted storage pool and then a replicated GlusterFS volume.

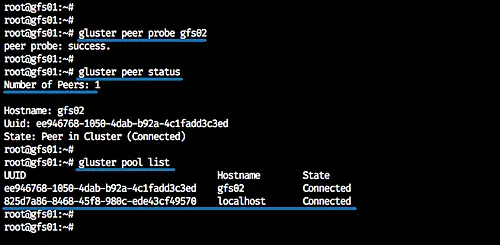

Create a Trusted Storage Pool

From the 'gfs01' server, we need to add the 'gfs02' server to the glusterfs storage pool.

Run the command below.

gluster peer probe gfs02

The command should report 'peer probe: success', which means the 'gfs02' server has been added to the trusted storage pool.

Check the storage pool status and list using the commands below.

gluster peer status

gluster pool list

You will see that the 'gfs02' server is connected to the peer cluster and appears in the pool list.
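If the pool check needs to run from a script instead of being read by eye, the status output can be filtered. This is a minimal sketch: `count_connected_peers` is a hypothetical helper, and it assumes that connected peers are reported with the line 'State: Peer in Cluster (Connected)', so the exact wording should be verified against the installed GlusterFS version.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count the peers that `gluster peer status` output
# (read from stdin) reports as connected members of the pool.
# Assumption: a connected peer shows "State: Peer in Cluster (Connected)".
count_connected_peers() {
    # grep -c prints 0 and exits non-zero when nothing matches;
    # "|| true" keeps the function's exit status clean in that case.
    grep -cF 'State: Peer in Cluster (Connected)' || true
}

# Example, on gfs01 as root:
#   gluster peer status | count_connected_peers
```

With the two-server pool from this tutorial, the count on 'gfs01' should be 1, since a node does not list itself as a peer.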

Set Up the Replicated GlusterFS Volume

After creating the trusted storage pool, we will create a new replicated GlusterFS volume. In this tutorial, the volume is backed by a directory on the system partition.

Note:

- For production servers, it's recommended to create the GlusterFS volume on a separate partition, not in a system directory.

Create a new directory '/glusterfs/distributed' on both the 'gfs01' and 'gfs02' servers.

mkdir -p /glusterfs/distributed

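Then, from the 'gfs01' server, create a replicated GlusterFS volume named 'vol01' with 2 replicas, one on 'gfs01' and one on 'gfs02'. The 'force' option is needed here because the volume directories are on the system (root) partition, which GlusterFS otherwise refuses to use.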

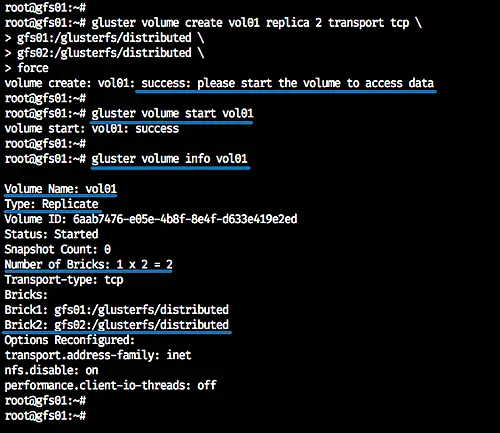

gluster volume create vol01 replica 2 transport tcp gfs01:/glusterfs/distributed gfs02:/glusterfs/distributed force
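The create command follows a regular pattern: one 'host:/path' entry per server, and a replica count equal to the number of entries. As an illustration only, the sketch below assembles that command from a list of hosts and prints it for review instead of running it. `build_volume_create` is a hypothetical helper name, and the trailing 'force' is kept only because this tutorial places the volume directories on the system partition.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print (do not run) a replicated-volume create command
# with one brick per host; the replica count equals the number of hosts.
build_volume_create() {
    local volume="$1" brick_path="$2"
    shift 2
    local cmd="gluster volume create ${volume} replica $# transport tcp"
    local host
    for host in "$@"; do
        cmd="${cmd} ${host}:${brick_path}"
    done
    # 'force' mirrors the tutorial's command; drop it when using a
    # dedicated partition.
    printf '%s force\n' "$cmd"
}

build_volume_create vol01 /glusterfs/distributed gfs01 gfs02
```

Printing the command first makes it easy to check the host list and paths before anything is created on the servers.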

The volume 'vol01' has now been created. Start 'vol01' and check the volume info.

gluster volume start vol01

gluster volume info vol01

The result is shown below.

At this stage, we have created the 'vol01' volume with the type 'Replicate' and 2 bricks, one on each of the 'gfs01' and 'gfs02' servers. All data will be replicated automatically to each replica server, and we're ready to mount the volume.

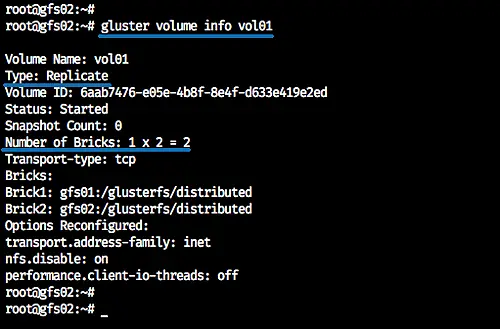

The same 'vol01' volume info can be viewed from the 'gfs02' server.
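To check the volume type and status without reading the whole report, individual fields can be pulled out of the 'gluster volume info' output. This is a minimal sketch: `volume_field` is a hypothetical helper, and it assumes the output consists of 'Key: value' lines such as 'Type: Replicate' and 'Status: Started'.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the value of one "Key: value" line from
# `gluster volume info` output read from stdin.
volume_field() {
    awk -F': ' -v key="$1" '$1 == key { print $2; exit }'
}

# Example, on gfs01 or gfs02 as root:
#   gluster volume info vol01 | volume_field Type
#   gluster volume info vol01 | volume_field Status
```

For the volume created in this step, the two fields of interest are 'Type', which should read 'Replicate', and 'Status', which should show that the volume has been started.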

Step 4 - Setup GlusterFS Client

In this step, we will install the glusterfs-client package on the client server and mount the GlusterFS volume 'vol01' on it.

Install glusterfs-client on the Ubuntu client using the apt command.

sudo apt install glusterfs-client -y

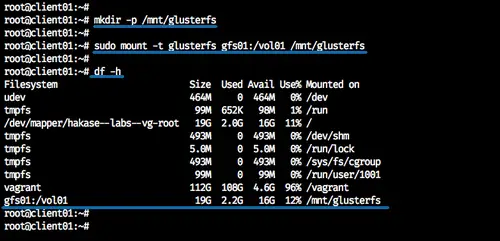

Once the glusterfs-client installation is complete, create a new directory '/mnt/glusterfs'.

mkdir -p /mnt/glusterfs

And mount the GlusterFS volume 'vol01' on the '/mnt/glusterfs' directory.

sudo mount -t glusterfs gfs01:/vol01 /mnt/glusterfs

Now check the mounted volume on the system.

df -h /mnt/glusterfs

And we will get the glusterfs volume mounted to the '/mnt/glusterfs' directory.
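A script can make the same check by reading the kernel's mount table. The sketch below assumes that GlusterFS FUSE mounts appear in '/proc/mounts' with the filesystem type 'fuse.glusterfs'; `is_gluster_mount` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the given mount point is listed with
# filesystem type fuse.glusterfs in /proc/mounts-style input on stdin.
# Fields per line: device, mount point, type, options, dump, pass.
is_gluster_mount() {
    awk -v mp="$1" '
        $2 == mp && $3 == "fuse.glusterfs" { found = 1 }
        END { exit !found }
    '
}

# Example, on client01:
#   is_gluster_mount /mnt/glusterfs < /proc/mounts && echo "vol01 is mounted"
```

Reading the mount table from stdin keeps the function easy to try against a saved copy of '/proc/mounts' as well as the live file.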

Additional:

To mount glusterfs permanently to the Ubuntu client system, we can add the volume to the '/etc/fstab'.

Edit the '/etc/fstab' configuration file.

vim /etc/fstab

And paste the configuration below.

gfs01:/vol01 /mnt/glusterfs glusterfs defaults,_netdev 0 0

Save and exit.

Now reboot the server and when it's online, we will get the glusterfs volume 'vol01' mounted automatically through the fstab.
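One thing to keep in mind with this entry is that the client contacts 'gfs01' at mount time to fetch the volume layout. The GlusterFS FUSE client has a 'backup-volfile-servers' mount option for naming fallback servers. Treat its availability as an assumption and confirm it in 'man mount.glusterfs' for the installed version. The sketch below only prints an fstab line that includes it, and `gluster_fstab_line` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print an /etc/fstab line for a GlusterFS volume.
# The optional fourth argument names a fallback server, passed through the
# backup-volfile-servers mount option (assumed to be supported by the
# installed mount.glusterfs).
gluster_fstab_line() {
    local primary="$1" volume="$2" mountpoint="$3" backup="${4:-}"
    local opts="defaults,_netdev"
    if [ -n "$backup" ]; then
        opts="${opts},backup-volfile-servers=${backup}"
    fi
    printf '%s:/%s %s glusterfs %s 0 0\n' "$primary" "$volume" "$mountpoint" "$opts"
}

gluster_fstab_line gfs01 vol01 /mnt/glusterfs gfs02
```

Without the fourth argument the function prints exactly the line used in this tutorial, so the option can be adopted or left out without changing anything else.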

Step 5 - Testing Replicate/Mirroring

In this step, we will test the data mirroring on each server nodes.

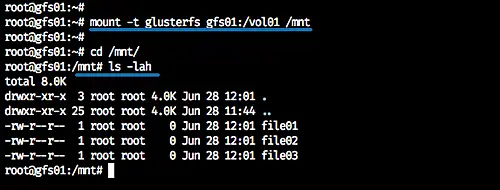

Mount the glusterfs volume 'vol01' to each glusterfs servers.

On 'gfs01' server.

mount -t glusterfs gfs01:/vol01 /mnt

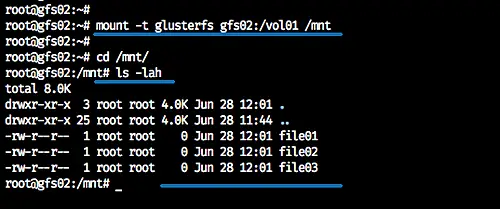

On 'gfs02' server.

mount -t glusterfs gfs02:/vol01 /mnt

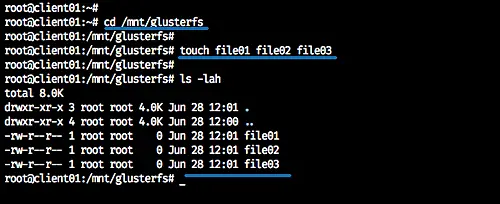

Now back to the Ubuntu client and go to the '/mnt/glusterfs' directory.

cd /mnt/glusterfs

Create some files using touch command.

touch file01 file02 file03

Now check both the 'gfs01' and 'gfs02' servers. Each one should show all the files that we created from the client machine.

cd /mnt/

ls -lah

Here's the result from the 'gfs01' server.

And here's the result from the 'gfs02' server.

All files created from the client machine are replicated to every server node of the GlusterFS volume.
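For more than a handful of files, comparing directory listings by eye becomes unreliable. One option, sketched here under the assumption that 'sha256sum' from GNU coreutils is installed, is to produce a sorted checksum manifest on each server and compare the two outputs. `dir_manifest` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "checksum  ./relative/path" for every regular
# file under a directory, sorted by path, so that two manifests can be
# compared line by line.
dir_manifest() {
    ( cd "$1" && find . -type f -exec sha256sum {} + | sort -k 2 )
}

# Example: run on gfs01 and on gfs02 against the mounted volume, then
# compare the two files with diff:
#   dir_manifest /mnt > "/tmp/$(hostname).manifest"
```

Files created with 'touch' are empty and therefore share one checksum, so for those the manifest mainly confirms that the same names exist on both servers. Writing some content into the test files makes the comparison more meaningful.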